This blog post is about how to leverage some of the vast capabilities of the AWS cloud to create new serverless environments on the fly to host web applications. Specifically, we’ll be looking at a combination of:

- Lambda – to host the code returned by an ExpressJS app. This will be one flavor of a “serverless server-side rendered” React front-end

- S3 – to store the assets generated by running a Webpack build

- API Gateway – to handle the routing logic when making requests to the site (for example, any requests made to /assets/* will go to the S3 bucket, while everything else goes to the Lambda function)

- CloudFormation – to templatize the resources listed above so that they can be created via an API call, instead of manually clicking buttons in the AWS Console

- CodeBuild – to tie everything together. This service will start a new build every time there are new commits made to a Github repository. Each branch will be used to identify the new resources generated by the CloudFormation stack.
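As a rough illustration of the routing rule described for API Gateway above, the decision boils down to a prefix check. The sketch below is plain JavaScript, not API Gateway configuration; the function name `resolveTarget` and the `'s3'` / `'lambda'` labels are made up for this example.

```javascript
// Hypothetical helper: mirrors the routing rule in plain JS.
// It is NOT real API Gateway configuration.
function resolveTarget(requestPath) {
  // Anything under /assets/ is served from the S3 bucket...
  if (requestPath.startsWith('/assets/')) {
    return 's3';
  }
  // ...and every other path is handled by the Lambda function.
  return 'lambda';
}

console.log(resolveTarget('/assets/main.abc123.js')); // s3
console.log(resolveTarget('/about')); // lambda
```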

Before we begin, you may be asking yourself what on earth a dynamic serverless environment is. Let’s cover those three words in reverse order. By “environment”, I’m referring to a deployment environment that source code can be deployed to in order to make a website (the application) accessible. Throughout this post, I’ll keep each environment simple so that there are not too many moving parts. We’ll only be dealing with a static ReactJS application: no authentication, no connections to databases, no POST requests, etc. Everything from the frontend is essentially static. The “dynamic” part refers to the creation of new environments as new commits are made. And I am calling this serverless due to the use of AWS Lambda to host some of the backend services. There is an ExpressJS “server” that changes behavior based on whether we’re running it in development mode (locally) or production mode (on the remote environment). Amazon made a nifty npm module called aws-serverless-express that allows the Express app and the Lambda function to integrate with each other seamlessly.

The intention of this is to come up with a possibly viable way to develop large applications with multiple developers in an efficient manner while making the differences between individual changes easy to spot. For example, when viewing pull requests on sites like Github or Bitbucket, the diffs are nicely color coded such that lines in red were removed and lines in green were added. But to compare how those differences affect the web application as a whole sometimes requires manual verification on an actual environment with the specific branch in place.

One great example of that concept in action is Git Integrations by Vercel (formerly known as Zeit) – that allows for “automatic deployments on every branch push”. Another similar service (admittedly the only other one like this that I’m familiar with) is Gitlab Review Apps. I found out about these two sometime last year, and ever since then I’ve wanted to build a system like that myself!

My goal here is to provide a comprehensive guide detailing exactly the steps necessary to set up the dynamic serverless environments system on any AWS account.

To start with, I’ll create a Github repository to host my application’s source code. The app will be “Guacchain – guacamole on the blockchain”. Inside my terminal I need to make a new directory and get it ready to install npm packages:

mkdir guacchain && cd guacchain && npm init -y && npm i -S aws-serverless-express body-parser cors express path react react-dom react-router-dom && npm i -D @babel/cli @babel/core @babel/plugin-proposal-export-default-from @babel/plugin-syntax-dynamic-import @babel/preset-react @babel/preset-env @babel/register assets-webpack-plugin babel-loader babel-plugin-syntax-dynamic-import babel-plugin-transform-react-remove-prop-types clean-webpack-plugin@1 css-loader file-loader eslint eslint-config-standard eslint-plugin-import eslint-plugin-node eslint-plugin-promise eslint-plugin-react eslint-plugin-standard html-webpack-plugin mini-css-extract-plugin react-dev-utils webpack webpack-cli

The project’s folder structure will look like this:

/scripts
    buildServer.sh
    deployLambdaFunction.sh
    s3AssetDeploy.sh
/src
    /components
        /pages
            About.js
            Home.js
            Subscribe.js
        App.jsx
        Header.jsx
    client.js
    dev.server.js
    index.js
    prod.server.js
    server.js
    webpackAssets.json
.babelrc
.eslintignore
.gitignore
index.js
package-lock.json
package.json
webpack.config.js

Starting with the files inside the src/components/pages folder:

Home.js:

import React from 'react';

import './App.css';

import guacIMG from './guac.jpg';

const App = () => (

<div>

<p>Guac Chain</p>

<p>Freshly made guacamole, on the blockchain...</p>

<img id="guac" src={guacIMG} height={282} width={300} alt="guac" />

</div>

);

export default App;

`guac.jpg` and `App.css` files must exist in the same directory, and here is that css file:

@keyframes myanimation

{

from {

left: 0;

}

to {

left: 100%;

}

}

img#guac {

position: absolute;

animation: myanimation infinite 5s linear;

}

About.js:

import React from 'react';

const About = () => (

<div>

<h1>About</h1>

</div>

);

export default About;

Subscribe.js:

import React from 'react';

const Subscribe = () => (

<div>

<h1>Subscribe</h1>

</div>

);

export default Subscribe;

Now within the `src/components` folder, the Header.jsx file can look like this:

import React from 'react'

import { Link } from 'react-router-dom'

const headerStyle = {

width: '100%',

minHeight: 60,

borderBottomWidth: 1,

borderBottomColor: 'black',

borderBottomStyle: 'solid',

display: 'flex',

flexDirection: 'row',

alignItems: 'center',

backgroundColor: 'lightgray'

}

const aTagStyle = {

display: 'flex',

flex: 1,

alignItems: 'center',

justifyContent: 'center',

height: 60

}

const Header = () => (

<div style={headerStyle}>

<Link to="/" style={aTagStyle}>Home</Link>

<Link to="/about" style={aTagStyle}>About</Link>

<Link to="/subscribe" style={aTagStyle}>Subscribe</Link>

</div>

)

Header.displayName = 'Header'

export default Header

And for the App.jsx in that same directory:

import React from 'react'

import { Route } from 'react-router-dom'

import Header from './Header';

import Home from './pages/Home'

import About from './pages/About'

import Subscribe from './pages/Subscribe'

const App = () => (

<div>

<Header />

<div>

<Route exact path="/" component={Home} />

<Route exact path="/about" component={About} />

<Route exact path="/subscribe" component={Subscribe} />

</div>

</div>

)

App.displayName = 'App'

export default App

Moving on to the `src` folder, the server.js file contains the code that instantiates the ExpressJS app, plus its handler function, which returns an HTML template string with the React JSX content rendered inside of it:

import express from 'express'

import path from 'path'

import React from 'react'

import { renderToString } from 'react-dom/server'

import { StaticRouter } from 'react-router-dom'

import App from './components/App'

import webpackAssets from './webpackAssets.json'

const app = express()

app.use(express.static(path.resolve(__dirname, '../public')))

export const mainHandler = (req, res) => {

const jsx = <StaticRouter location={req.url}><App /></StaticRouter>

const reactDom = renderToString(jsx)

res.writeHead(200, { 'Content-Type': 'text/html' })

const responseStr = htmlTemplate(reactDom)

res.end(responseStr)

}

const assetsArray = Object.keys(webpackAssets).map(key => webpackAssets[key].js).filter(asset => asset)

export const htmlTemplate = (reactDom) => {

return `

<!DOCTYPE html>

<html>

<head>

<meta charset="utf-8">

<title>Guacchain</title>

</head>

<body>

<div id="app">${reactDom}</div>

${assetsArray.map(filePath => `<script src="${filePath}"></script>`).join('')}

</body>

</html>

`

}

export default app

Here we are utilizing react-router’s StaticRouter component, which is useful in server-side rendering scenarios. The `webpackAssets.json` file is also referenced here, but it doesn’t exist yet; it will be generated by the `npm start` script that we will add shortly.
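To make the `assetsArray` line a bit more concrete, here is a small standalone sketch of the same map/filter logic. The sample object only approximates what `webpackAssets.json` might contain; the chunk names and hashed file names are invented for illustration, and the helper names `collectScriptPaths` and `renderScriptTags` are not part of the project.

```javascript
// Invented sample: one entry per chunk, each with optional js/css paths.
const sampleWebpackAssets = {
  app: { js: '/assets/main.1a2b3c.js', css: '/main.css' },
  'npm.react': { js: '/assets/main.4d5e6f.js' },
  // An entry without a js file, which the filter step should drop.
  extras: { css: '/extras.css' }
};

// Same logic as assetsArray in server.js: pick each chunk's js path
// and discard chunks that have none.
function collectScriptPaths(webpackAssets) {
  return Object.keys(webpackAssets)
    .map(key => webpackAssets[key].js)
    .filter(asset => asset);
}

// Same logic as the template: one <script> tag per collected path.
function renderScriptTags(paths) {
  return paths.map(filePath => `<script src="${filePath}"></script>`).join('');
}

const paths = collectScriptPaths(sampleWebpackAssets);
console.log(paths);
console.log(renderScriptTags(paths));
```

Entries without a `js` key come back as `undefined` from the map step, which is why the filter is needed before building the script tags.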

The prod.server.js file in this directory is intended to be used by the Lambda function once it is deployed. It uses a middleware package that lets requests to the Lambda function get handled by the Express app:

import app, { mainHandler } from './server';

const express = require('express')

const bodyParser = require('body-parser')

const cors = require('cors')

const awsServerlessExpressMiddleware = require('aws-serverless-express/middleware')

const awsServerlessExpress = require('aws-serverless-express')

const router = express.Router()

router.use(cors())

router.use(bodyParser.json())

router.use(bodyParser.urlencoded({ extended: true }))

router.use(awsServerlessExpressMiddleware.eventContext())

router.get('/*', mainHandler)

app.use('*', router)

const server = awsServerlessExpress.createServer(app)

exports.handler = (event, context) => {

awsServerlessExpress.proxy(server, event, context)

}

And for the last file inside the `src` directory, `client.js`:

import React from 'react'

import { hydrate } from 'react-dom'

import { BrowserRouter } from 'react-router-dom'

import App from './components/App'

const app = document.getElementById('app')

// Use hydrate instead of render to attach event listeners to existing markup

hydrate(<BrowserRouter><App /></BrowserRouter>, app)

Here is where the React hydrate function is used to transform the HTML string given back to us by the ExpressJS server into a living, breathing React application by attaching the event listeners.

To continue with the remaining files left, here is the `.babelrc` which needs to be in the root directory:

{

"presets": [

[

"@babel/env", {

"targets": {

"node": "current"

}

}

],

"@babel/preset-react"

],

"plugins": [

"syntax-dynamic-import",

"@babel/plugin-proposal-export-default-from",

"transform-react-remove-prop-types",

[

"transform-assets-import-to-string",

{

"baseDir": "/assets",

"baseUri": "https://DOMAIN_NAME"

}

]

]

}

Regarding the last Babel plugin specified there (transform-assets-import-to-string), I found it necessary in order to convert the direct image imports into the appropriate URL requests. The Github repo for that plugin can be found here. The DOMAIN_NAME part is a placeholder for AWS CodeBuild, so that one of the build steps (before running babel) can be `sed -i "s/DOMAIN_NAME/$DomainName/g" .babelrc`. That lets the asset source URL point at the right domain, which will be based on the git branch receiving the commit.
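For readers less familiar with `sed`, the substitution amounts to a global find-and-replace on the file's text. The sketch below shows the same idea in JavaScript on an in-memory string; the helper name `applyDomainName` and the example branch domain are assumptions, and the real build step still uses `sed` as described above.

```javascript
// Hypothetical helper: replaces every DOMAIN_NAME placeholder,
// like `sed "s/DOMAIN_NAME/<domain>/g"` would on the file.
function applyDomainName(babelrcText, domainName) {
  return babelrcText.split('DOMAIN_NAME').join(domainName);
}

// Trimmed-down stand-in for the plugin options in .babelrc.
const babelrcFragment = JSON.stringify({
  baseDir: '/assets',
  baseUri: 'https://DOMAIN_NAME'
});

// Example domain for a branch called "feature-x" (invented).
const updated = JSON.parse(applyDomainName(babelrcFragment, 'feature-x.guacchain.com'));
console.log(updated.baseUri); // https://feature-x.guacchain.com
```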

And the webpack.config.js:

const path = require('path')

const CleanWebpackPlugin = require('clean-webpack-plugin')

const HtmlWebpackPlugin = require('html-webpack-plugin')

const InlineChunkHtmlPlugin = require('react-dev-utils/InlineChunkHtmlPlugin')

const AssetsPlugin = require('assets-webpack-plugin')

const MiniCssExtractPlugin = require('mini-css-extract-plugin')

const prod = process.env.NODE_ENV === 'production';

const mode = prod ? 'production' : 'development';

const devtool = prod ? 'eval-cheap-source-map' : 'eval-source-map';

module.exports = {

mode,

entry: {

app: './src/client.js'

},

devtool,

// determines the name and place for your output bundles

output: {

filename: 'assets/main.[contenthash].js',

path: path.resolve(__dirname, 'public')

},

optimization: {

splitChunks: {

chunks: 'all',

maxInitialRequests: Infinity,

minSize: 0,

cacheGroups: {

vendor: {

test: /[\\/]node_modules[\\/]/,

name (module) {

// get the name. E.g. node_modules/packageName/not/this/part.js

// or node_modules/packageName

const packageName = module.context.match(/[\\/]node_modules[\\/](.*?)([\\/]|$)/)[1]

// npm package names are URL-safe, but some servers don't like @ symbols

return `npm.${packageName.replace('@', '')}`

}

}

}

}

},

plugins: [

// deletes the public folder for fresh builds

new CleanWebpackPlugin(['public']),

new HtmlWebpackPlugin({

title: 'Guacchain',

filename: 'index.html',

favicon: './src/favicon.ico'

}),

new InlineChunkHtmlPlugin(HtmlWebpackPlugin, [/\*.js/]),

new AssetsPlugin({

filename: 'public/webpackAssets.json'

}),

new MiniCssExtractPlugin()

],

// sets rules for processing different files being 'imported'

// (or loaded) into js files

module: {

rules: [

{

test: /\.jsx?$/,

exclude: /node_modules/,

use: [

{

// uses .babelrc as config

loader: 'babel-loader'

}

]

},

{

test: /\.css$/i,

use: [

{

loader: MiniCssExtractPlugin.loader,

options: {

esModule: true

}

},

'css-loader'

]

},

{

test: /\.(png|svg|jpg|gif)$/,

use: [

'file-loader',

]

},

]

},

resolve: {

extensions: ['.js', '.jsx']

}

}

And the index.js:

require('@babel/register')

require('./src/server')

Now inside the package.json, we need to add the npm scripts:

"scripts": {

"start": "npx webpack && cp public/webpackAssets.json src/webpackAssets.json",

"server": "node index.js",

"test": "echo \"Error: no test specified\" && exit 1",

"makeScriptsExecutable": "chmod +x ./scripts/buildServer.sh && chmod +x ./scripts/s3AssetDeploy.sh && chmod +x ./scripts/deployLambdaFunction.sh",

"build": "rm -rf build && mkdir -p build && cp -r src/* build/ && cd build && zip -r function.zip ./",

"build:server": "./scripts/buildServer.sh",

"deploy": "./scripts/s3AssetDeploy.sh && ./scripts/deployLambdaFunction.sh"

},

With those npm scripts in place, we can proceed to create the `scripts` folder and add these three files inside of it:

buildServer.sh:

#!/usr/bin/env bash

rm -rf dist

npx babel build -d dist

mkdir -p dist/assets

cp -r public/assets/* dist/assets

cp src/webpackAssets.json dist/webpackAssets.json

cd dist

zip -r function.zip ./

deployLambdaFunction.sh:

#!/usr/bin/env bash

aws lambda update-function-code --function-name $FUNCTION_NAME --zip-file fileb://dist/function.zip

And s3AssetDeploy.sh:

#!/usr/bin/env bash

echo $S3_ASSETS_BUCKET_URI

aws s3 sync dist/assets $S3_ASSETS_BUCKET_URI

Back in the terminal, running these commands:

npm run makeScriptsExecutable

NODE_ENV=production npm run start

NODE_ENV=production npm run build

NODE_ENV=production npm run build:server

will generate the build, dist and public folders that our scripts will then use to deploy them to the appropriate AWS resources. How will those resources get created? Well, that’s where CloudFormation comes into play.

CloudFormation

A CloudFormation stack is made from either a JSON or YAML template and is an example of Infrastructure as Code. I only started using it within the past year, but it has quickly become my favorite “meta” AWS service, since CloudFormation makes it extremely efficient to create new resources. No more button clicks in the web console necessary!

The CloudFormation template I made for this example has two prerequisites. The first is that you must have a registered domain with custom DNS so that we can let AWS Route 53 be responsible for the dynamic subdomains (which will eventually be added as new commits are made). The second is creating a Certificate through AWS Certificate Manager (ACM) which will allow us to map our API Gateway domains to the custom subdomains for convenience.

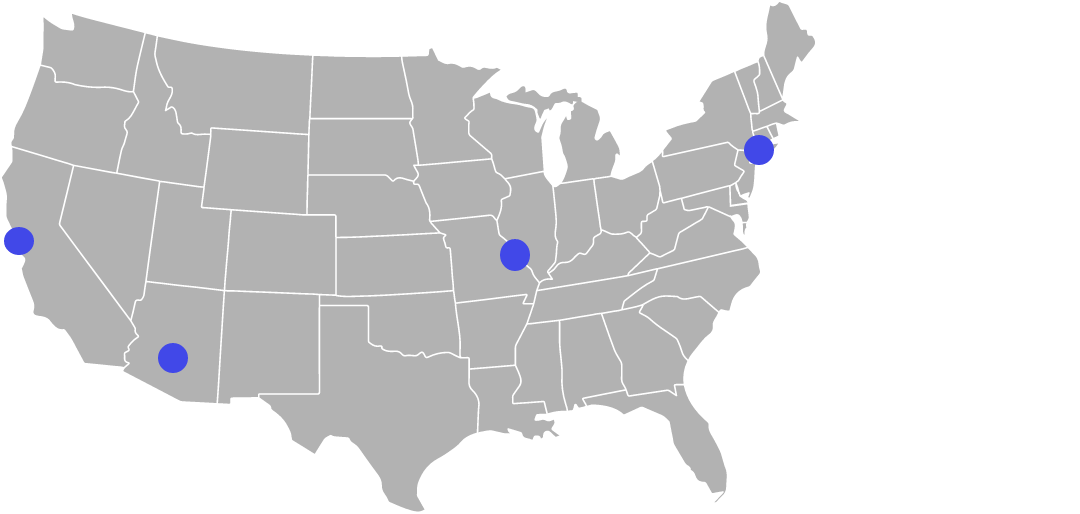

As for the custom DNS, the first step is creating a hosted zone for your domain name. After doing that, AWS will have a couple record sets already inside the hosted zone, one of them being of Type NS (name server). Opening that record shows a Value box with 4 different rows:

What I then did in my NameCheap web console was add those values to the Custom DNS section for my domain:

After doing that, the next prerequisite involves creating the certificate. For this, AWS Certificate Manager allows you to add domain names to the certificate being requested, and I added both guacchain.com as well as *.guacchain.com so that it can be valid for any subdomain.

As long as the Route 53 hosted zone was created beforehand, AWS is able to detect its presence (after choosing DNS validation) and gives the option to create the additional record sets for you.

Now we can finally get to the CloudFormation template. Because it is relatively lengthy, here is a link to the one I used: https://gist.github.com/PatNeedham/5b3c4b68997b0375f27d402a056a6e1b

That template has four parameters:

- stackID – this is the unique identifier for the CloudFormation stack created by this template. The value will be defined in the CodeBuild project’s commands, as a combination of the domain name we are working with and the branch that received a commit.

- DomainName – this is needed for the API Gateway Domain name resource and the Route53 domain record set. It is a combination of the top level domain (tld) such as guacchain.com, along with any subdomain if there is one.

- route53HostedZoneId – this is a value that must be obtained inside the Route53 console page, where there is a “Hosted Zone ID” column towards the right:

Trying to copy it is actually quite tricky because after double clicking the text or highlighting it to select, a Details tab will pop up on the right side, making it a futile effort. But inside that tab, normal copying efforts should work as expected.

- CertificateArn – this one can be obtained on the AWS Certificate Manager console after expanding the certificate in question:

The fourth and final value that needs replacing (which I almost forgot about) is the lambdaFunction resource’s Layers value. I decided to include this so that when the Lambda function is created, there’s no need to include all the node_modules inside the deployment directly. This prevents all those dependencies from being included in the zip file, which makes it impossible to view the Lambda source code on the web console due to the source code size constraints. Here is the script I use to generate the Lambda Layer:

#!/usr/bin/env bash

rm -rf node_modules

rm -rf nodejs

npm install --production

mkdir -p nodejs

cp -r node_modules nodejs/

zip -r -q lambda-deps-layer.zip nodejs

aws lambda publish-layer-version \

--layer-name $LAMBDA_DEPS_LAYER_NAME \

--compatible-runtimes nodejs12.x \

--zip-file fileb://lambda-deps-layer.zip | aws lambda update-function-configuration --function-name $FUNCTION_NAME --layers $(jq -r '.LayerVersionArn')

After placing that inside a /scripts/publishDepsLayer.sh file and running `chmod +x ./scripts/publishDepsLayer.sh && LAMBDA_DEPS_LAYER_NAME=guacchain-deps-layer ./scripts/publishDepsLayer.sh`, I can see the new layer when viewing the Lambda Layers section of my AWS console:

The Version ARN listed there in your console can then be used to replace the Layer value tied to my account.

Creating the CloudFormation Stack

With those four replacements out of the way, it’s now time to create the CloudFormation stack. On the CloudFormation dashboard, you can choose Create stack, With new resources:

For AWS accounts without any existing stacks, the dashboard UI might look a little different but there should be a Create button displayed somewhere within sight. On that Create page, you can keep the “Template is ready” option selected, but for the Specify Template section, choose “Upload a template file” then select the yaml file with those replacements made. After doing so, an S3 URL gets generated. Copy that, as we’ll need it for the CodeBuild job.

CodeBuild

Now it’s time to tie all of these resources together with AWS CodeBuild. The CodeBuild dashboard lets you create a new project, with these Source Provider options:

- Amazon S3

- AWS CodeCommit

- Github

- Bitbucket

- Github Enterprise

I selected Github and proceeded with the “Repository in my Github Account” option (requires connecting to GitHub using OAuth). For the next section I checked the “Rebuild every time a code change is pushed to this repository” checkbox underneath Webhook and chose all the event types except for PULL_REQUEST_MERGED. In the next section down titled Environment I chose these configurations:

- Environment image: Managed image

- Operating System: Amazon Linux 2

- Runtime(s): Standard

- Image: aws/codebuild/amazonlinux2-aarch64-standard:1.0

- Image version: Always use the latest image for this runtime version

- Privileged checkbox: left unchecked

- Service Role: I have an existing one called “CodeBuildServiceRole”. That role only has one attached policy: “AdministratorAccess”. And under the Trust Relationships tab, the one trusted entity I have there is “codebuild.amazonaws.com”

- Additional configuration: I left unchanged.

- For Buildspec, this is where I chose Insert build commands and clicked “Switch to editor”, and here is what I have inputted there:

version: 0.2
phases:
  install:
    runtime-versions:
      nodejs: 12
    commands:
      - curl -fsSL https://raw.githubusercontent.com/patneedham/aws-codebuild-extras/master/install >> extras.sh
      - . ./extras.sh
  pre_build:
    commands:
      - NODE_ENV=development npm install
      - npm run makeScriptsExecutable
  build:
    commands:
      - stackID="$CODEBUILD_GIT_BRANCH"
      - BASE_NAME="prod"
      - DomainName="${CODEBUILD_GIT_BRANCH}.${tld}"
      - |
        if [ "$productionBranch" = "$CODEBUILD_GIT_BRANCH" ]; then
          DomainName=$tld
        fi
      - stackName="$tld-$stackID"
      - stackNameFormatted="${stackName//.}"
      - FUNCTION_NAME=$stackNameFormatted
      - S3_ASSETS_BUCKET_URI="s3://$stackNameFormatted"
      - TEMPLATE_URL=https://s3-external-1.amazonaws.com/cf-templates-1npj2t2ifo384-us-east-1/2020172oLP-stack2.yaml
      - theLambda=$(aws cloudformation describe-stack-resources --stack-name $stackNameFormatted --logical-resource-id lambdaFunction || echo "")
      - |
        if [ -z "$theLambda" ]; then
          # must create the stack
          aws cloudformation create-stack --stack-name $stackNameFormatted --template-url $TEMPLATE_URL --parameters ParameterKey=stackID,ParameterValue=$stackNameFormatted ParameterKey=DomainName,ParameterValue=$DomainName ParameterKey=route53HostedZoneId,ParameterValue=$route53HostedZoneId ParameterKey=CertificateArn,ParameterValue=$CertificateArn --capabilities CAPABILITY_IAM
          sleep 45
        fi
      - sed -i "s/DOMAIN_NAME/$DomainName/g" .babelrc
      - NODE_ENV=production npm run start
      - NODE_ENV=production npm run build
      - NODE_ENV=production npm run build:server
      - NODE_ENV=production npm run deploy

Within the phases.install.commands section towards the beginning, I’m running a curl command to fetch a third party script (originally found here, then I forked it for security reasons) that exposes the name of the branch that the commit triggering the build was pushed to. Surprisingly, the CODEBUILD_SOURCE_VERSION environment variable does not work for projects using Github as the source repository. That third party script provides the CODEBUILD_GIT_BRANCH environment variable, which is used within the phases.build.commands section to define the stackID.
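The naming rules buried in those build commands can be easier to follow outside of shell syntax. Here is a sketch of the same derivation in JavaScript, assuming the `tld` and `productionBranch` values come from the CodeBuild project's environment variables; the function name `deriveEnvironmentNames` and the sample branch names are made up for this example.

```javascript
// Hypothetical helper mirroring the buildspec's shell variables.
function deriveEnvironmentNames({ tld, branch, productionBranch }) {
  // The production branch gets the bare domain; others get a subdomain.
  const domainName = branch === productionBranch ? tld : `${branch}.${tld}`;
  // Equivalent of stackName="$tld-$stackID" followed by
  // "${stackName//.}", which strips every dot from the name.
  const stackName = `${tld}-${branch}`.split('.').join('');
  return {
    domainName,
    stackName,
    functionName: stackName, // FUNCTION_NAME
    assetsBucketUri: `s3://${stackName}` // S3_ASSETS_BUCKET_URI
  };
}

console.log(deriveEnvironmentNames({
  tld: 'guacchain.com',
  branch: 'feature-x',
  productionBranch: 'master'
}));
```

With `branch: 'master'` the same call yields the stack name `guacchaincom-master`, which matches the Lambda log group name that shows up later in this post.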

Viewing list of active/inactive environments

Now that a new environment is made for every new branch added to the repository, it’s a good time to understand which ones are being used and how many there are in total. To accomplish this, we will rely on CloudWatch Logs (to detect which of the Lambda functions connected to our environments are actually being invoked) along with the AWS CLI to list all of the Lambda functions associated with those created environments. Finding the CLI equivalent of viewing logs in the web console turned out to be more complicated than I had originally thought. The way I typically go about doing that in the web console would be to first click on the Monitoring subtab on the Lambda function’s page:

That opens up a new tab showing a table of log streams. Only after clicking the top-most row in that table does it finally show the details of event-level logs (when the Lambda function was invoked, its duration and memory consumption, any console.logs or error messages found in the code, etc):

There are two corresponding steps to access this via the CLI. The first one is using the describe-log-streams command. I was able to get a JSON representation of that table of log streams by running `aws logs describe-log-streams --log-group-name /aws/lambda/guacchaincom-master`.

For the log-group-name argument, I copied what appears towards the top of the CloudWatch page:

The returned JSON contained many log streams, far more than what initially appears on the web console. That must be because of the default date/time filtering (maybe the web console only displays log streams for the past 24 hours). The last element in the JSON array returned by the CLI command is the most recent one, and it contains fields such as that log stream’s name as well as first and last event timestamps.
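One way to turn that JSON into an active/inactive verdict is sketched below. It reads the `logStreams` array and each stream's `lastEventTimestamp` (milliseconds since the epoch) from the CLI output, and takes the maximum rather than relying on array order. The seven-day idle threshold, the function name `summarizeLogStreams`, and the sample data are assumptions for illustration only.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Hypothetical helper: summarizes a describe-log-streams response.
function summarizeLogStreams(response, nowMs, maxIdleMs) {
  const streams = response.logStreams || [];
  // Most recent event across all streams (0 if none recorded).
  const lastEvent = streams.reduce(
    (latest, stream) => Math.max(latest, stream.lastEventTimestamp || 0),
    0
  );
  return {
    streamCount: streams.length,
    lastEventTimestamp: lastEvent || null,
    // "Active" here means: invoked within the allowed idle window.
    active: lastEvent > 0 && nowMs - lastEvent <= maxIdleMs
  };
}

// Invented sample shaped like the CLI's JSON output.
const now = 1600000000000;
const sampleResponse = {
  logStreams: [
    { logStreamName: 'stream-a', lastEventTimestamp: now - 10 * DAY_MS },
    { logStreamName: 'stream-b', lastEventTimestamp: now - 2 * DAY_MS }
  ]
};

console.log(summarizeLogStreams(sampleResponse, now, 7 * DAY_MS));
```

Running this once per Lambda function (one log group each) would give a simple table of which branch environments are still seeing traffic.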

Updating and deleting environments

Going back to the CodeBuild commands included in the buildspec I want to highlight two operations in particular:

- theLambda=$(aws cloudformation describe-stack-resources --stack-name $stackNameFormatted --logical-resource-id lambdaFunction || echo "")

- |

if [ -z "$theLambda" ]; then

# must create the stack

aws cloudformation create-stack --stack-name $stackNameFormatted --template-url $TEMPLATE_URL --parameters

The first one creates a variable (theLambda) based on the result of that CLI command. If a CloudFormation stack with that name already exists, it proceeds to access the ID of the included Lambda function. Otherwise the command fails, falling through to `echo ""`. That variable is used inside the if statement to check whether it’s empty. Whenever that’s the case, and only when that’s the case, CodeBuild proceeds to execute the create-stack command. This ensures that for every subsequent commit pushed to a branch after the first one, it will use the existing S3 bucket and Lambda function connected to that stack instead of creating a brand new one. That in turn leads to much faster build times since there is no longer any need to wait for the stack to get created.
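The same create-only-if-missing pattern can be sketched in JavaScript with the AWS calls swapped out for injected functions. Everything here is a stand-in: `ensureStack`, `describeStackResources` and `createStack` are hypothetical names that imitate the two CLI commands, and the fake in-memory "cloud" exists only so the example runs on its own.

```javascript
// Hypothetical sketch of the buildspec's "create only if missing" logic.
function ensureStack(stackName, cloud) {
  let existing = '';
  try {
    // Stands in for `aws cloudformation describe-stack-resources`.
    existing = cloud.describeStackResources(stackName);
  } catch (err) {
    // Stands in for the `|| echo ""` fallback when the stack is absent.
    existing = '';
  }
  if (!existing) {
    // Stands in for `aws cloudformation create-stack`.
    cloud.createStack(stackName);
    return 'created';
  }
  return 'reused';
}

// In-memory fake used purely for demonstration.
const fakeStacks = new Set();
const fakeCloud = {
  describeStackResources(name) {
    if (!fakeStacks.has(name)) throw new Error(`Stack ${name} does not exist`);
    return `${name}-lambdaFunction`;
  },
  createStack(name) {
    fakeStacks.add(name);
  }
};

console.log(ensureStack('guacchaincom-feature-x', fakeCloud)); // created
console.log(ensureStack('guacchaincom-feature-x', fakeCloud)); // reused
```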

As for deleting environments, ideally I would like the deletion to be a direct consequence of a branch on the Github repository being deleted. Accomplishing that would require updating the buildspec to detect when that action happens.

Extending beyond one repository per application

As a web application grows, it becomes more practical to break out its components and/or pages into separate repositories. That lets a developer who might only want to make updates on a particular page have the option of making a branch on a dedicated repository for that page, instead of the whole application. The App.jsx file referenced in an earlier section of this article included:

```
import Header from './Header';
import Home from './pages/Home'
import About from './pages/About'
```

However with those components living in separate repositories, one would have to change those relative import statements to indicate where those components are coming from. Suppose we want to put the header component into its own repository – there is a way to install it from that repo and name the module whatever we want. It doesn’t matter if there already is a published npm package with the same name. As long as there are no plans to publish it to npm, the name can be anything.

To replace that local Header component with a module fetched from Github, I can do something like `npm install --save header@git+https://<token-from-github>:x-oauth-basic@github.com/<user>/<GitRepo>.git#<BranchOrTag>`. The <token-from-github> part can be replaced with a custom token created on https://github.com/settings/tokens.
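If several components end up in their own repositories, assembling that install argument by hand gets error-prone. Below is a small sketch that builds the same string from its parts. The function name `gitDependencySpec` and all sample values (user, repository, branch, token) are invented placeholders, not real accounts or credentials.

```javascript
// Hypothetical helper: builds the `name@git+https://...` argument
// in the same format as the npm install command shown above.
function gitDependencySpec({ alias, token, user, repo, ref }) {
  return `${alias}@git+https://${token}:x-oauth-basic@github.com/${user}/${repo}.git#${ref}`;
}

// Placeholder values for illustration only.
const spec = gitDependencySpec({
  alias: 'header',
  token: 'EXAMPLE_TOKEN',
  user: 'example-user',
  repo: 'guacchain-header',
  ref: 'master'
});

console.log(`npm install --save ${spec}`);
```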

Future follow up improvements to be made

This was mainly an in-depth exploration into the vast offerings of AWS, and thus has plenty of areas that can be improved upon. For example, the CodeBuild build commands in use rely on a “sleep 45” step in between “aws cloudformation create-stack” and the npm scripts. That was an arbitrary amount of time I found useful to let the underlying resources get created before the deployments (code into the Lambda function and assets into the S3 bucket) took place. Maybe that create-stack command can be placed before npm install step which would allow that 45 second amount to be reduced.

Another area I think can become more seamless is the prerequisites – creating the Route 53 hosted zone, setting up the custom DNS, requesting the ACM certificate, setting up the CodeBuild project, etc. I believe those can be wrapped up into a sort of “meta” function that takes place in a dedicated Lambda, such that you give it the domain name to work with, the Github repository url to link to the CodeBuild project, and the NS records are given back to you.

Also, one improvement I plan on making sooner than the one stated above is adding the option for “stable” environments that stay connected to a particular branch rather than a commit ID. That way, there can be a mapping between branch names and their corresponding CloudFormation stacks. So whenever a new commit is added, CodeBuild can first check whether an environment already exists for that branch, and if so, simply update the S3 bucket and Lambda function instead of creating a new stack from scratch. And CodeBuild appears to support the various pull request events:

Final Thoughts

What I’ve learned through this whole process is that AWS is an enormous, seemingly endless ecosystem. I only scratched the surface of its vast offerings, but hopefully set a path for further exploration. One concern worth bringing up is that of vendor lock-in. Ideally I’d like to set up a system like I’ve done here on Azure and/or Google Cloud as well, to get a better understanding of how they compare against each other when it comes to integrating the various pieces.
