Pretty URLs

Angular, by default, is configured to use hashbangs (#!) to denote a new page or state of the site/application. Site crawlers don’t technically consider URLs with a hashbang to be new pages. These types of URLs are fragments and are generally bad for SEO: by design, crawlers don’t index the content of fragments, since a fragment isn’t considered a full page.

However, Google claims that “we’ll generally crawl, render, and index the #! URLs” in a blog post dated October 2015. This announcement doesn’t come as a complete surprise, since Googlebot renders pages with a Chrome-based engine, but it is still a relatively new feature. There are mixed reports online about whether it actually works and how long it takes to index the page(s). Some people report waiting up to two weeks to get indexed. This is, of course, a Google-only feature, and other search engines still need to be considered.

Fortunately, there is a relatively simple fix for the dreaded hashbang URLs: the HTML5 History API. Angular provides an easy way of leveraging this API with minimal effort. Instructions on how to convert an existing application can be found here.
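As a rough illustration of what that conversion involves, the sketch below turns on HTML5 mode through `$locationProvider.html5Mode`, which is the AngularJS 1.x API for this. The module name `app` is a hypothetical placeholder, and the registration is guarded so the snippet also loads outside a browser.

```javascript
// Config function for AngularJS 1.x: switch $location from hash-based URLs
// to HTML5 History API URLs.
function enableHtml5Mode($locationProvider) {
  $locationProvider.html5Mode({
    enabled: true,     // use pushState-style URLs where the browser supports them
    requireBase: true  // expects a <base href="/"> tag in the document <head>
  });
}
enableHtml5Mode.$inject = ['$locationProvider'];

// Register only when AngularJS is loaded; 'app' is a hypothetical module name.
if (typeof angular !== 'undefined') {
  angular.module('app').config(enableHtml5Mode);
}
```

With HTML5 mode on, the server also has to answer deep links such as /products/42 with the application's index page, otherwise a page refresh will return a 404.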

Internal anchor links need to be converted from the hashbang format so that the crawler can read them. Links that use ng-click to change state should also be converted to use ng-href, so that the crawler finds a real href to follow.
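When an application has many hard-coded links, the conversion can be partly automated. The helper below is a hypothetical sketch (`toPrettyUrl` is not part of Angular) that rewrites a hash or hashbang route into a plain path and leaves ordinary in-page anchors alone.

```javascript
// Rewrite "#/route" or "#!/route" style links into plain paths.
// Links without a hash route (including in-page anchors like "#top")
// are returned unchanged.
function toPrettyUrl(href) {
  // Group 1: everything before the hash (minus one trailing slash).
  // Group 2: the route path that followed "#" or "#!".
  const match = /^(.*?)\/?#!?(\/.*)$/.exec(href);
  return match ? match[1] + match[2] : href;
}
```

For example, `toPrettyUrl('/#!/products/42')` yields `/products/42`, which is the value to place in the ng-href attribute.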

Pre-rendering

The best practice previously proposed by the Google Webmasters blog was to use escaped fragments to serve pre-rendered stateless versions of dynamic pages. This has since been retracted as a best practice in favor of allowing Google to fetch JS and CSS resources via the robots.txt file, so that their crawlers can render the page natively.
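For reference, under the escaped fragments scheme a crawler requests `/?_escaped_fragment_=/products/42` in place of `/#!/products/42`, and the server answers with a pre-rendered snapshot of that route. A minimal sketch of the server-side detection, assuming Node.js and a hypothetical helper name, might look like this:

```javascript
// Return the application route a crawler asked for through the
// _escaped_fragment_ query parameter, or null for a normal request.
function routeFromEscapedFragment(requestUrl) {
  // The base URL is only there so that relative request URLs can be parsed.
  const url = new URL(requestUrl, 'http://localhost');
  // searchParams.get() percent-decodes the value and returns null when absent.
  return url.searchParams.get('_escaped_fragment_');
}
```

A non-null result (including the empty string, which stands for the root route) means the request came through the escaped fragments scheme and should receive the pre-rendered version.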

Other crawlers are most likely not as sophisticated as Google’s, so there is probably still a need to serve pre-rendered pages to those bots. However, it should be noted that serving a crawler a different version of a site might be considered “cloaking”, which is generally frowned upon by search engines.
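One common way to decide who gets the pre-rendered page is to inspect the User-Agent header. The sketch below is only an illustration: the list of bot substrings is an assumption rather than a vetted registry, and `shouldPrerender` is a hypothetical helper name.

```javascript
// Hypothetical list of user-agent substrings for crawlers that are assumed
// not to execute JavaScript. Adjust it to the bots you actually care about.
const NON_RENDERING_BOTS = ['bingbot', 'yandex', 'facebookexternalhit', 'twitterbot'];

// Decide whether a request should receive the pre-rendered snapshot.
function shouldPrerender(userAgent) {
  const ua = (userAgent || '').toLowerCase();
  return NON_RENDERING_BOTS.some((bot) => ua.includes(bot));
}
```

To stay clear of cloaking, the pre-rendered snapshot should carry the same content a regular visitor ends up seeing once the JS has run.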

There are several paid 3rd-party services that provide pre-rendering for SPAs: seo.js, brombone and seo4ajax are among the most popular ones. There is also an open-source self-hosted solution, prerender.io, which might be worth looking into.

<meta type=”data” />

If Google does in fact render dynamic JS pages with their crawlers, then it would be safe to assume that modifying the <title> and <meta> tags with JS would suffice.
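A minimal sketch of switching the title and description from JS is shown below. It assumes ngRoute and route definitions that carry hypothetical `title` and `description` properties; the module name `app` is a placeholder too.

```javascript
// Update the document title and the description meta tag, if present.
function applyPageMeta(doc, meta) {
  if (meta.title) {
    doc.title = meta.title;
  }
  const tag = doc.querySelector('meta[name="description"]');
  if (tag && meta.description) {
    tag.setAttribute('content', meta.description);
  }
}

// Wiring for AngularJS with ngRoute, registered only when AngularJS is loaded.
// Assumes each route was defined with `title` and `description` properties.
if (typeof angular !== 'undefined') {
  angular.module('app').run(['$rootScope', '$document', function ($rootScope, $document) {
    $rootScope.$on('$routeChangeSuccess', function (event, current) {
      // $document is a jqLite wrapper; index 0 is the raw document object.
      applyPageMeta($document[0], current || {});
    });
  }]);
}
```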

There is an angular module that can handle meta tag content switching which supports ngRoute and simple states in ui-router. It also supports Facebook Open Graph Protocol and Twitter Cards.

Almost all online resources also mention that a sitemap.xml would be greatly beneficial for SEO, since the bots will first follow all those links before blindly crawling around the site. Care needs to be taken to make sure that there are no dead links within the sitemap as that can lead to a negative impact on SEO.
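A sitemap for an SPA can be generated from the list of known routes. The sketch below builds a minimal sitemap.xml following the sitemaps.org format; `buildSitemap` and `escapeXml` are hypothetical helper names, and checking the URLs for dead links is left to the caller.

```javascript
// Escape characters that are not allowed verbatim inside XML text.
function escapeXml(text) {
  return text
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

// Build a minimal sitemap.xml document from a list of absolute URLs.
function buildSitemap(urls) {
  const entries = urls.map((url) => '  <url><loc>' + escapeXml(url) + '</loc></url>');
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    '</urlset>'
  ].join('\n');
}
```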

Conclusion

While Google’s new JS site crawler sounds like the holy grail of SPA+SEO, there are still some steps that need to be taken for the crawlers to be able to do their jobs.

- Pretty URLs both as anchor hrefs and in the URL bar

- Allow JS and CSS resources to be fetched via robots.txt

- Include a sitemap.xml
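The last two items can be covered by one robots.txt file. The sketch below generates one that explicitly allows JS and CSS files and points to the sitemap. The `*` and `$` wildcards are an extension honored by Google and some other crawlers rather than part of the original robots.txt convention, and `buildRobotsTxt` is a hypothetical helper. In many cases it is enough simply not to Disallow the directories that hold these resources.

```javascript
// Build a robots.txt that lets crawlers fetch JS and CSS resources
// and advertises the sitemap location.
function buildRobotsTxt(sitemapUrl) {
  return [
    'User-agent: *',
    'Allow: /*.js$',   // wildcard patterns: supported by Google, not by every crawler
    'Allow: /*.css$',
    '',
    'Sitemap: ' + sitemapUrl
  ].join('\n');
}
```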

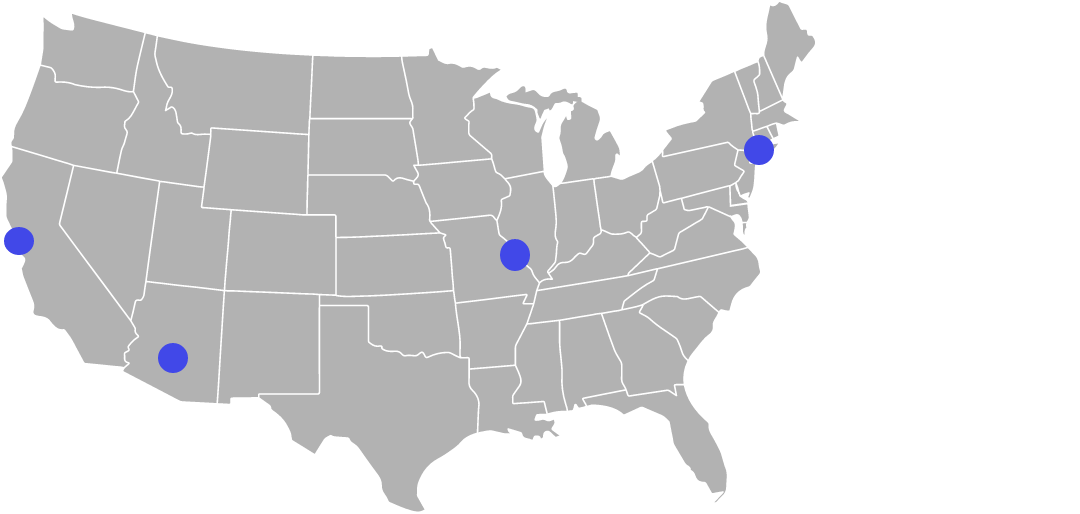

Google holds more than 65% of U.S. search traffic, which leaves a fairly large gap (about 35%) served by the Bing, Yahoo and Yandex crawlers, and it is unclear whether they can render and properly index SPAs. Since Yahoo is powered by Bing and Bing tends to follow Google pretty closely, it’d be safe to assume Bing/Yahoo will have SPA indexing capabilities very soon, if not already.

Facebook, Twitter and other social media sites also employ some sort of site crawler to enable rich social sharing. Those bots almost certainly do not render JS.

Ultimately, the best solution is a mixture: serve certain bots the user-facing site, while others get a pre-rendered stateless version that uses the escaped fragments solution.

—

Written by InRhythm Engineer Vitaly Isikov
